AI Review Agent automatically corrects errors in translations, providing a second layer of quality assurance before human review. It improves translation quality by identifying and fixing issues while enforcing your organization’s style guidelines.

Scope

AI Review checks:

- Style guide compliance (except formatting and alignment)

- Termbase terminology validation

- Critical, Major, and Minor errors

- Segments with “No error” status

- Exact matches (100%) and In-context exact matches (101%)

Enabling AI Review

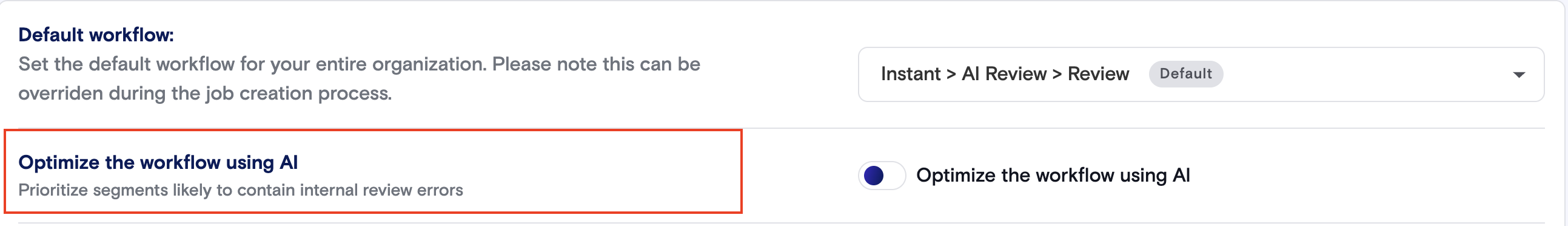

Set your default workflow to AI Review in LILT Settings by selecting one of the following options at the organization or domain level:

- Instant > AI Review > Review

- Instant > AI Review > Review > Secondary Review

Model Learning

All reviewed and accepted segments are saved to your selected model, continuing the fine-tuning of your custom models.

AI Provider

The AI Provider for AI Review is configured in AI Providers. The default is LILT Contextual AI; Anthropic Claude can be configured as an alternative provider.

Supported Languages

AI Review is available for these language pairs:

| Source language | Target language |
|---|---|
| English | German, French, Spanish, Japanese, Korean, Portuguese, Chinese (Simplified), Chinese (Traditional), Polish, Vietnamese, Turkish, Norwegian, Finnish, Swahili, Russian, Swedish, Italian, Ukrainian, Indonesian, Dutch, Danish, Hindi, Thai, Malay, Arabic, Romanian, Czech, Hungarian |
| German | English |
| French | English |
| Spanish | English |
| Chinese (ZH) | English |

- Unsupported language pairs default to Instant > Review workflow and skip the AI Review stage.

- Jobs with languages that lack MT support cannot be submitted.
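
The fallback behavior described above can be sketched in a few lines of Python. This is only an illustration of the documented rule, not LILT's implementation or API: the function names and the list-of-stages representation are assumptions made for the example, and the language names are copied from the supported pairs listed above.

```python
# Supported pairs, copied from the documentation above.
# The data layout and function names are hypothetical, for illustration only.
ENGLISH_TARGETS = {
    "German", "French", "Spanish", "Japanese", "Korean", "Portuguese",
    "Chinese (Simplified)", "Chinese (Traditional)", "Polish", "Vietnamese",
    "Turkish", "Norwegian", "Finnish", "Swahili", "Russian", "Swedish",
    "Italian", "Ukrainian", "Indonesian", "Dutch", "Danish", "Hindi",
    "Thai", "Malay", "Arabic", "Romanian", "Czech", "Hungarian",
}
SOURCES_INTO_ENGLISH = {"German", "French", "Spanish", "Chinese (ZH)"}

# Documented fallback for unsupported pairs: Instant > Review.
FALLBACK_WORKFLOW = ["Instant", "Review"]


def supports_ai_review(source, target):
    """Return True if the pair appears in the supported-languages table."""
    if source == "English":
        return target in ENGLISH_TARGETS
    return target == "English" and source in SOURCES_INTO_ENGLISH


def resolve_workflow(source, target, configured):
    """Return the stages a job runs through for this language pair.

    Supported pairs keep the configured workflow; unsupported pairs
    fall back to Instant > Review, skipping the AI Review stage.
    """
    if supports_ai_review(source, target):
        return list(configured)
    return list(FALLBACK_WORKFLOW)


if __name__ == "__main__":
    configured = ["Instant", "AI Review", "Review"]
    print(resolve_workflow("English", "Thai", configured))
    print(resolve_workflow("German", "French", configured))
```

The sketch does not model the second note above (jobs whose languages lack MT support cannot be submitted), since the list of MT-supported languages is not given here.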

Linguist Interface

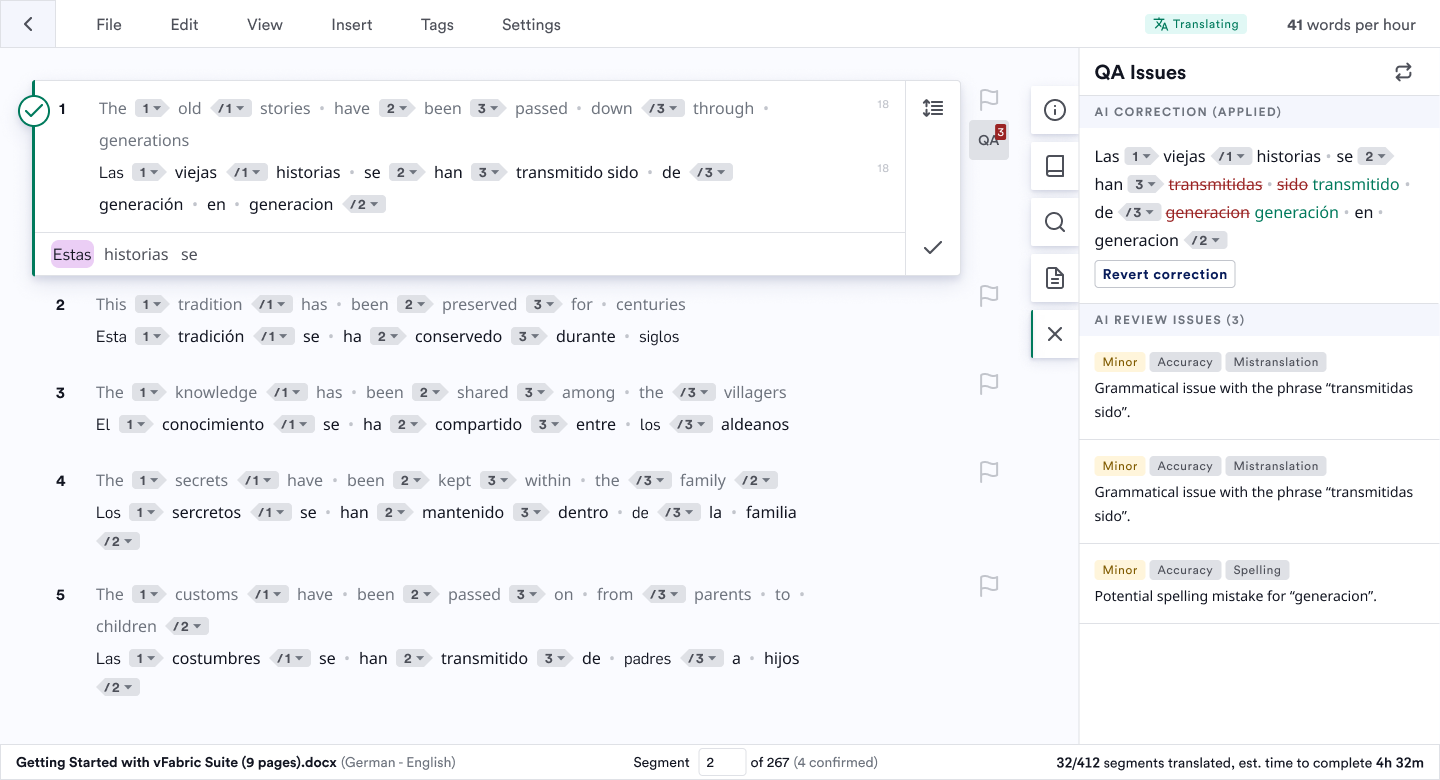

Viewing AI Review Corrections

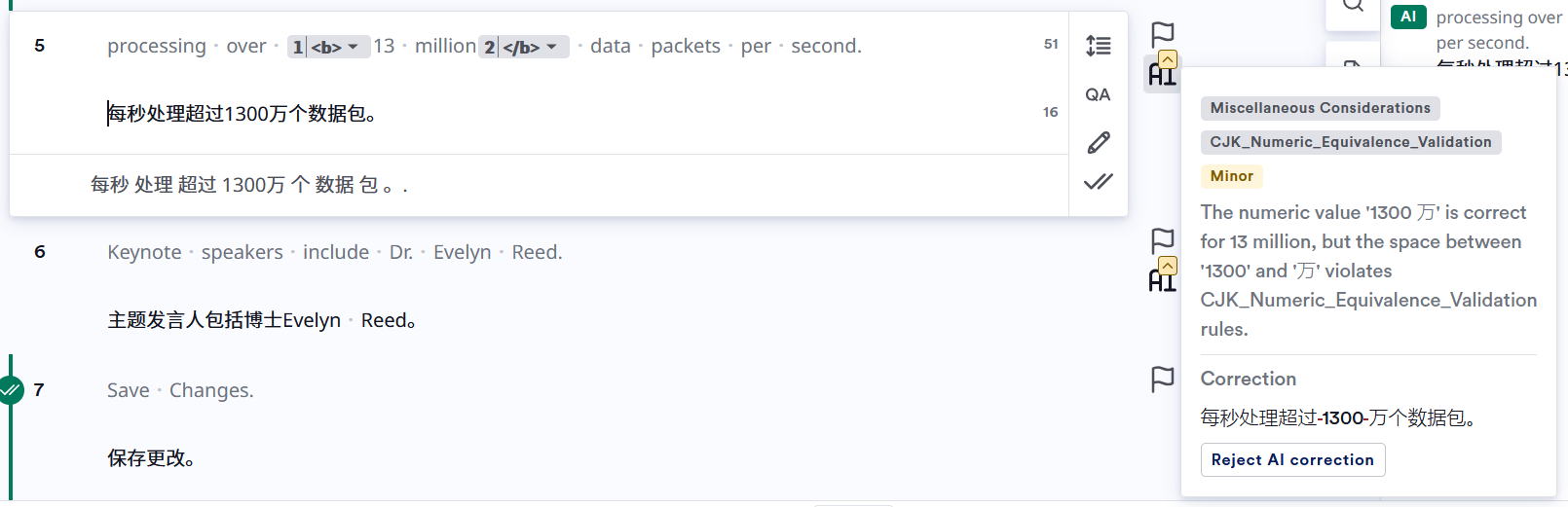

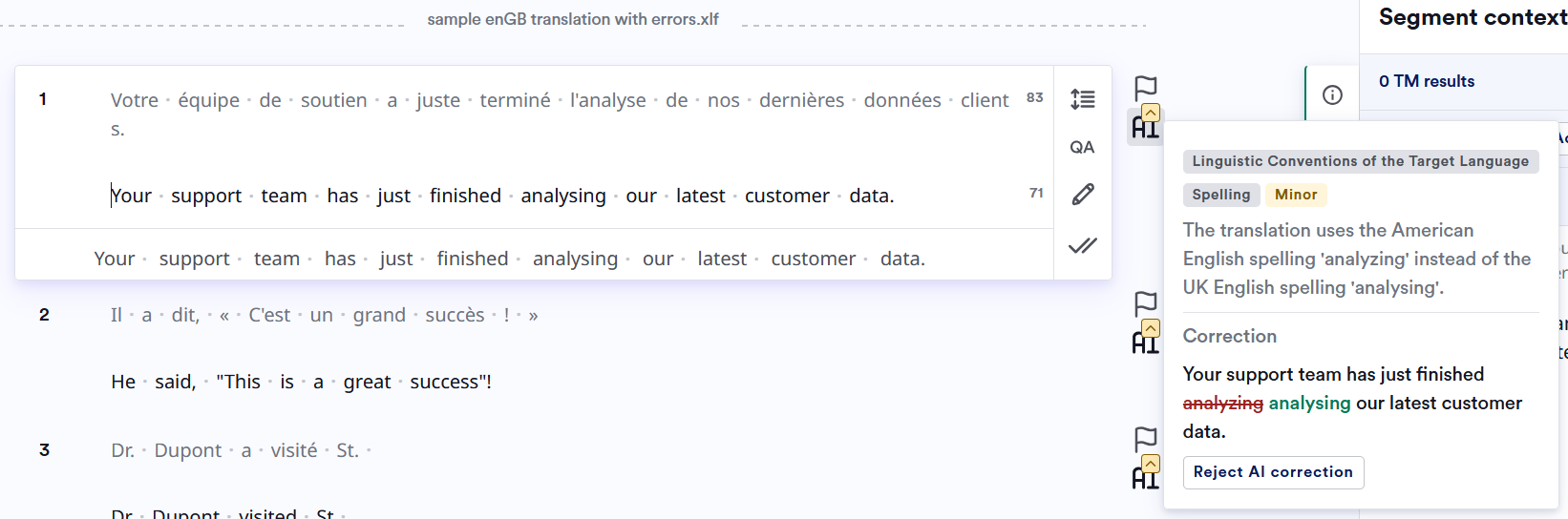

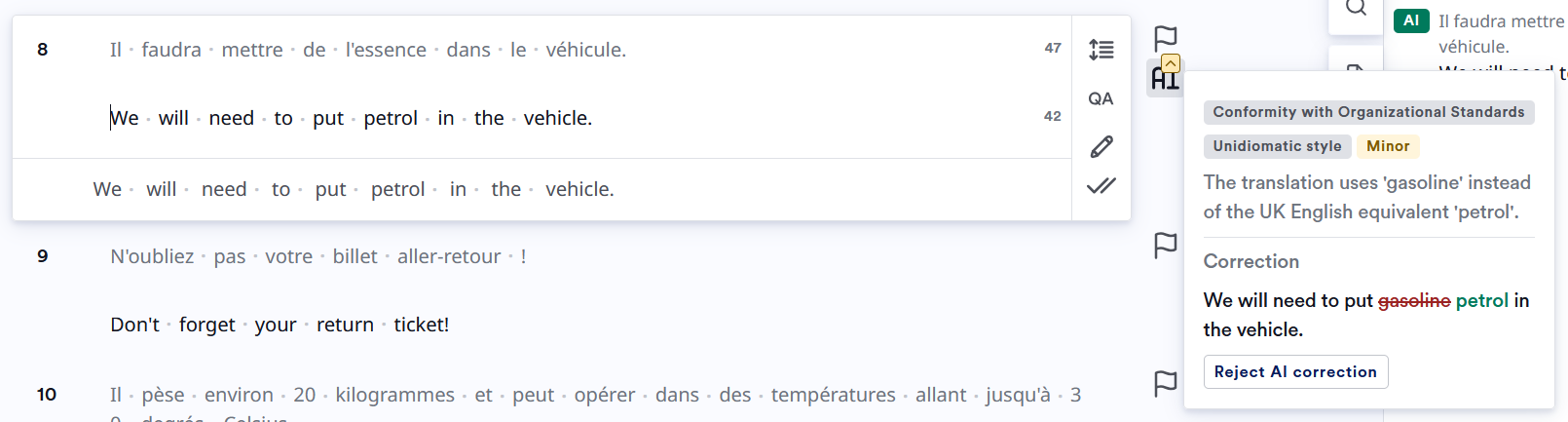

AI Review results appear in the CAT sidebar. Changes made by AI Review are shown with removed text struck through in red and added text in green. Issues found are categorized by severity, category, and sub-category. You must accept segments that have been corrected by AI Review; accepting a segment also reprojects any tags to ensure correct formatting.

Rejecting AI Review Corrections

You can reject AI Review changes to revert the text to its original state before you accept the segment. To reject the changes, click “Revert correction”. The reverted correction is then logged as an error and appears under the error logging flag.

Revision Reports

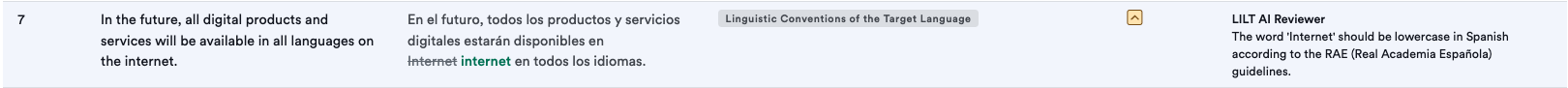

Revision reports show AI Review corrections alongside reviewer changes.

- Error category and severity

- “LILT AI Reviewer” in the Comments column

- “Yes” in the Reviewer changes? column

- AI Review error rate percentage (calculated over 80% of segments processed)

- All AI Review changes marked with “LILT AI Reviewer”

Custom Style Guides

AI Review enforces custom style guides to maintain consistency across translations. Style guides control spelling, punctuation, vocabulary, date/time formats, and number formats.

Ask a linguist to verify the rules and AI Review results before deployment.

Writing Effective Rules

Use explicit, prescriptive commands

- Write “Replace X with Y” or “Use X instead of Y”

- Avoid “Consider using X” or “X might be preferred”

- Group related rules (spelling, punctuation, terminology)

- Place most important requirements at the top

- Provide 2-3 concrete examples per rule

- State critical requirements in multiple rule contexts

- Repetition improves adherence given AI variability

- Example: If preserving variables is critical, repeat this in every category

- Use explicit language ‘gates’ and prescriptive commands

- Start each rule with a clear language gate. Keep gates consistent across the guide.

- Use full locale when norms differ: pt-BR only: … vs pt-PT only: …

- Avoid mixed-language rules; only one language per rule. Do not define overlapping rules for the same language in multiple sections.

- Examples:

- Don’t specify spacing, line breaks, or text alignment

- Don’t control HTML/XML tag structure

- AI Review cannot process document-level formatting context

- Instruct AI Review to never modify {variables}, <tags>, or [placeholders]

- Repeat this constraint in multiple rule categories
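
Before handing a style guide to a linguist, it can help to run a quick automated check against the guidance above. The following Python sketch is one possible approach, not a LILT feature: the gate pattern (a language or locale code followed by "only:"), the list of hedged phrases, and both function names are assumptions chosen for illustration.

```python
import re
from typing import List

# Hedged phrasings the guidance above says to avoid (illustrative, not exhaustive).
HEDGED = re.compile(r"\b(consider using|might be preferred)\b", re.IGNORECASE)

# Assumed gate format: language or locale code, then "only:", e.g. "pt-BR only: ...".
GATE = re.compile(r"^[a-z]{2,3}(-[A-Z]{2})? only:\s*\S")

# Tokens that should never be modified: {variables}, <tags>, [placeholders].
PROTECTED = re.compile(r"\{[^{}]*\}|<[^<>]*>|\[[^\[\]]*\]")


def lint_rule(rule: str) -> List[str]:
    """Return a list of problems found in a single style guide rule."""
    problems = []
    if not GATE.match(rule):
        problems.append("missing language gate")
    if HEDGED.search(rule):
        problems.append("hedged wording")
    return problems


def protected_tokens_preserved(before: str, after: str) -> bool:
    """Check that a correction kept every protected token.

    Order is ignored, because a translation may legitimately move
    tokens around; only the set of tokens and their counts must match.
    """
    return sorted(PROTECTED.findall(before)) == sorted(PROTECTED.findall(after))


if __name__ == "__main__":
    print(lint_rule("pt-BR only: Replace X with Y."))  # []
    print(lint_rule("Consider using X."))  # ['missing language gate', 'hedged wording']
    print(protected_tokens_preserved("Hi {name}, see <b>this</b>",
                                     "Oi {name}, veja <b>isto</b>"))  # True
```

A check like this only catches surface problems such as a missing gate or a dropped variable; it does not replace the linguist review recommended above.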

Rule Categories

Structure your style guide with these categories:

1. Spelling Standardization

Examples